Image via Wikimedia Commons. Licensed CC0 |

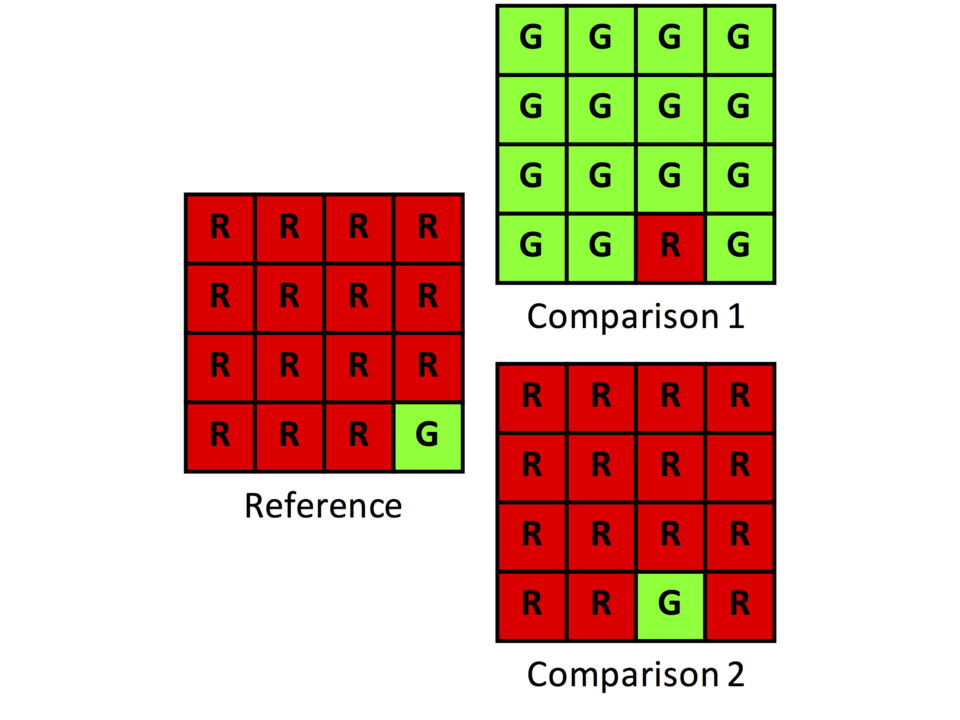

Joanne Garrett

Cohen's kappa: statistic measuring inter-rater agreement for categorical items.

Cohen's kappa coefficient (symbol κ, lowercase Greek kappa) is a statistic used to measure inter-rater reliability for qualitative or categorical data.[1] It is generally thought to be a more robust measure than a simple percent agreement calculation, because κ accounts for the possibility of agreement occurring by chance. There is controversy surrounding Cohen's kappa because indices of agreement are difficult to interpret. Some researchers have suggested that it is conceptually simpler to evaluate disagreement between items.[2] Cohen's kappa coefficient ranges from −1 (complete disagreement) to 1 (complete agreement).[3]

History: The first mention of a kappa-like statistic is attributed to Galton in 1892.[4][5] The seminal paper introducing kappa as a… (Source: Wikipedia)

|
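To make the chance correction described above concrete, here is a minimal Python sketch. It assumes the standard definition κ = (p_o − p_e) / (1 − p_e), where p_o is the observed proportion of agreement and p_e is the agreement expected by chance from each rater's label frequencies. The excerpt itself does not spell this formula out. The function name `cohens_kappa` and the sample labels are hypothetical, chosen only for illustration.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labelling the same items (illustrative sketch)."""
    if len(rater_a) != len(rater_b) or len(rater_a) == 0:
        raise ValueError("both raters must label the same non-empty set of items")

    n = len(rater_a)

    # Observed agreement: fraction of items given the same label by both raters.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n

    # Chance agreement: for each category, multiply the two raters' marginal
    # proportions, then sum over categories.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    p_e = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)

    if p_e == 1.0:
        # Both raters used one identical category throughout; kappa is undefined.
        raise ValueError("kappa is undefined when chance agreement equals 1")

    return (p_o - p_e) / (1 - p_e)


# Hypothetical ratings: the raters agree on 3 of 4 items (75% raw agreement).
a = ["yes", "yes", "no", "no"]
b = ["yes", "no", "no", "no"]
kappa = cohens_kappa(a, b)
```

In this made-up example the raw agreement is 0.75, but half of that is expected by chance given the label frequencies, so κ comes out lower than the percent agreement. Identical ratings across two balanced categories give κ = 1, and fully swapped ratings give κ = −1, matching the range stated above.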